A common feature of iOS applications is the ability to record, edit and upload user-generated videos and then display them in an infinitely scrolling feed. TikTok is one of the most popular apps to support a full-featured video recording and editing experience, combined with an endlessly scrolling feed of newly uploaded content. Part of what makes the app engaging and addictive is that the video feed scrolls smoothly, with barely perceptible loading time for videos as they scroll into view.

Unfortunately, achieving this experience is surprisingly difficult, especially if your development team doesn’t have much experience with video. It is hard to create a video backend at all - the details about video upload, storage, transcoding and delivery are notoriously tricky to get right. It is also challenging to achieve a smoothly scrolling infinite feed on iOS using just UIKit and AVFoundation. A naive implementation will result in noticeable lag, dropped frames and, often, a frozen UI. This post will cover the basics of how to create a TikTok-esque feed on iOS using the Mux Video backend, a lightweight Parse server deployed on Heroku, and AsyncDisplayKit and AVPlayer on the client.

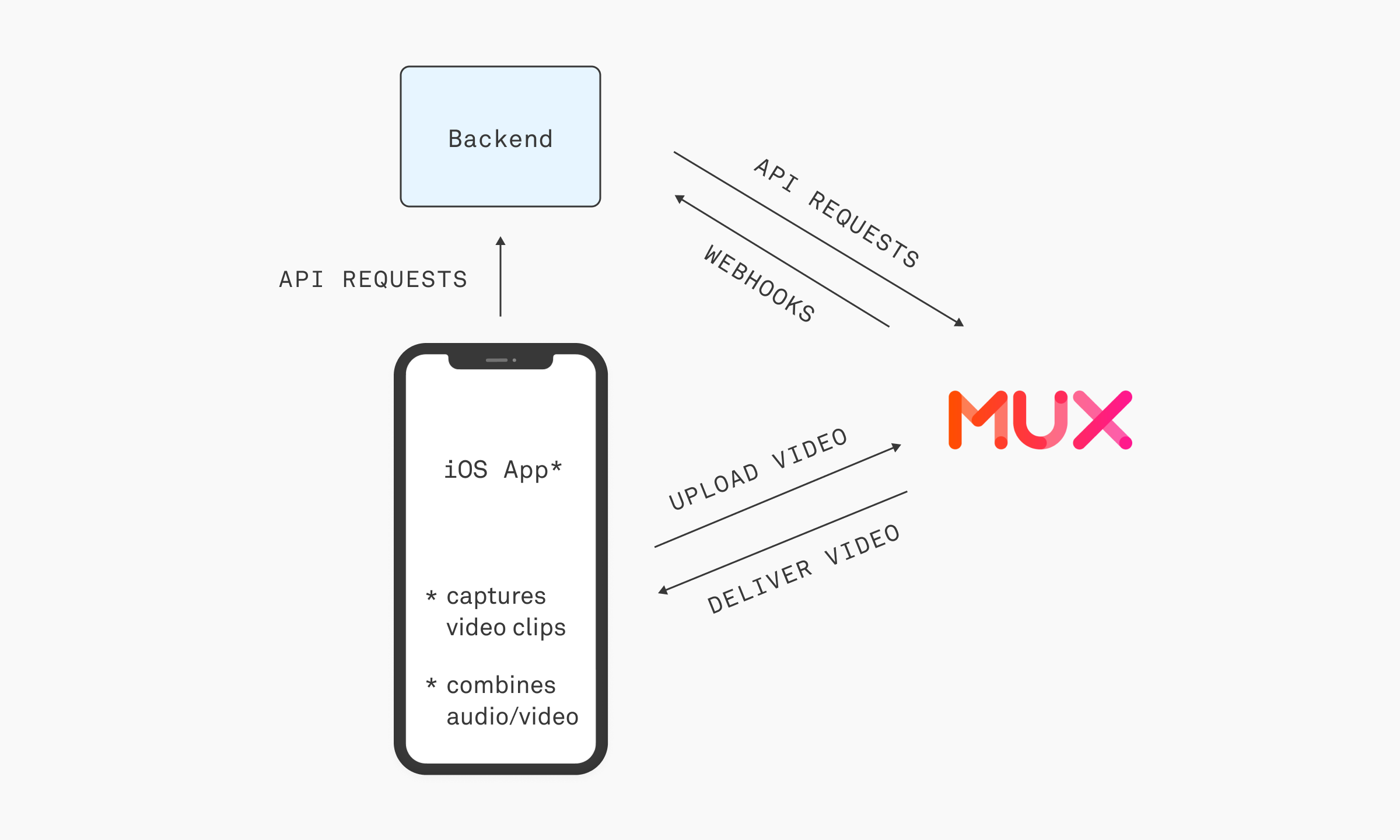

The full structure of our application will work like this. The iOS app will make a request to the backend, which will make one API request to Mux and receive webhook events from Mux. The iOS app will capture video clips and combine these video clips and audio into a single file that will be uploaded to Mux. Mux will handle the storage, transcoding and delivery of the finalized videos to all your users.

Backend with Mux Video

The backend of this project is a small piece. Our backend needs to be able to make one API request to Mux, receive webhooks from Mux's API, and save data about the ingested video assets to a database. You can use any backend you want for this part. In this example I use Parse and Heroku.

Start by creating a fork of the Parse server example here. We will be modifying this Parse example server to add two parts:

- An upload/ endpoint that will return an authenticated Mux direct upload URL used by the iOS client to upload the video.

- A webhook that will handle incoming status events from Mux Video about the ingested asset, including when the asset is created in Mux and when it is ready for playback.

Install the mux-node SDK

Follow the instructions here.

Require and initialize the SDK in cloud/main.js:

Create an API access token for Mux.

Save the MUX_TOKEN_ID and MUX_TOKEN_SECRET as Heroku config vars. These will be available to the Parse application as process.env.MUX_TOKEN_ID and process.env.MUX_TOKEN_SECRET.

Upload

In cloud/main.js:

This function starts by generating a unique passthrough ID that will be used to identify the video asset once it is created in Mux. Then it creates a new upload URL using the Mux SDK, passing in the passthrough ID.

Finally, it persists this passthrough ID and upload ID as fields in a new Post.

You can optionally persist additional information about the asset such as author, tags, likes etc.

Webhook

Create the webhook in index.js:

Add the webhook handler in cloud/main.js:

This webhook handles two events, video.asset.created and video.asset.ready. It uses the passthrough id to query for the associated Post and updates its status to created or ready. This status is later used by the iOS client to query for videos that are ready to be displayed in the feed.

iOS

Uploading Video

The client uploads a video saved on disk by:

- Getting the direct upload URL from the upload/ endpoint

- Uploading the video file to this URL

That's it! Mux will handle the storage, transcoding and delivery of this video for you.
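The second step can be sketched in a few lines of Swift. This is a minimal sketch, not the project's actual client code: it assumes the direct upload URL has already been fetched from the upload/ endpoint, and the function names `makeUploadRequest` and `uploadVideo` are hypothetical. Mux direct uploads accept the file as the body of an HTTP PUT to the upload URL.

```swift
import Foundation
#if canImport(FoundationNetworking)
import FoundationNetworking  // URLSession lives here on non-Apple platforms
#endif

/// Builds the PUT request for a Mux direct upload URL.
/// (Hypothetical helper; the URL is whatever the upload/ endpoint returned.)
func makeUploadRequest(uploadURL: URL) -> URLRequest {
    var request = URLRequest(url: uploadURL)
    request.httpMethod = "PUT"
    return request
}

/// Streams the video file at `fileURL` to the direct upload URL.
func uploadVideo(at fileURL: URL,
                 to uploadURL: URL,
                 completion: @escaping @Sendable (Result<Void, Error>) -> Void) {
    let request = makeUploadRequest(uploadURL: uploadURL)
    let task = URLSession.shared.uploadTask(with: request, fromFile: fileURL) { _, response, error in
        if let error = error {
            completion(.failure(error))
            return
        }
        // Treat any non-2xx status as a failed upload.
        guard let http = response as? HTTPURLResponse,
              (200..<300).contains(http.statusCode) else {
            completion(.failure(URLError(.badServerResponse)))
            return
        }
        completion(.success(()))
    }
    task.resume()
}
```

Uploading from a file rather than from in-memory `Data` keeps memory use flat for long recordings.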

Capturing Video

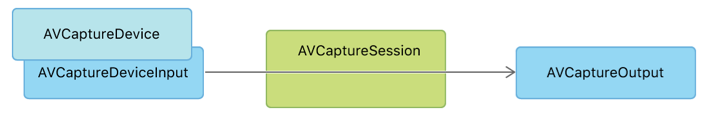

AVFoundation provides an architecture and components with which you can build your own custom camera UI that can capture video, photos, and audio. The main pieces of this architecture are inputs, outputs and capture sessions. Capture sessions connect one or more inputs to one or more outputs. Inputs are the media sources, such as your device camera or microphone. Outputs take the media from these inputs and convert it into data, such as movie files written to disk or raw pixel buffers.

https://developer.apple.com/documentation/avfoundation/cameras_and_media_capture

Custom Camera Interface Using AVCaptureSession

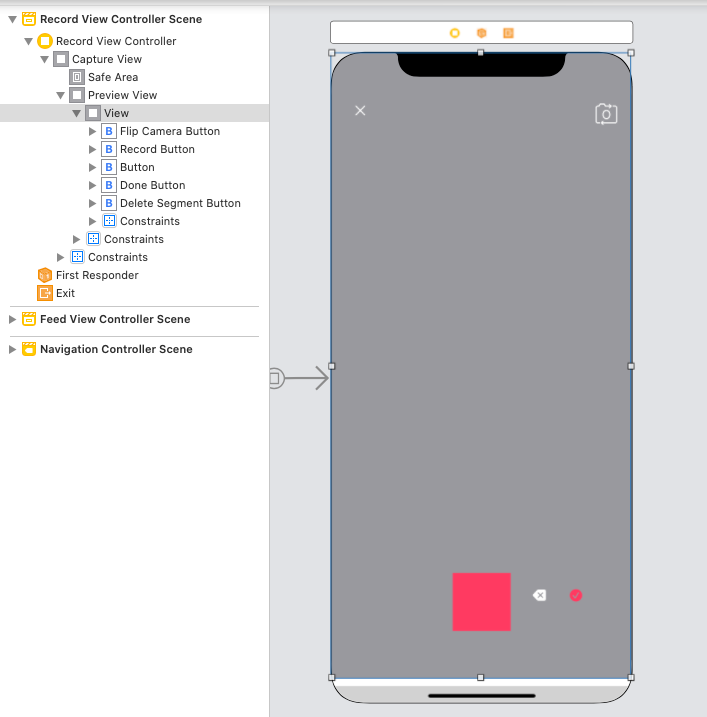

First, create a view controller for our custom camera UI called RecordViewController in your storyboard. It will be displayed modally, so it should have a cancel button at the top left. You can optionally add a button to flip the camera at the top right. At the bottom, we have buttons to record video, delete a video segment and finalize the video. The TikTok app has many more controls than just these, but we will focus on these core actions.

Notice that we have added a Preview View that is a subview of the main UIView of this view controller. The view will be used to show a preview of the video being captured.

Hook up each IBOutlet and IBAction for these controls.

In addition to these controls, we have also added the main components of the capture system as properties of the view controller - AVCaptureSession, AVCaptureDeviceInput, AVCaptureVideoDataOutput and AVCaptureVideoPreviewLayer.

Configuring the Capture Session

When the view appears, we will set up a new capture session. When the view disappears we will stop our capture session. Our capture session will have two inputs - one for the camera and one for the microphone. It will have only one output.

Once we have configured the capture session, we set up the live preview of the capture and add it to our preview view.
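The setup described above might look roughly like the following. Treat it as a sketch under assumptions: the helper names `makeCaptureSession` and `makePreviewLayer` and the `CaptureSetupError` type are hypothetical, and the back wide-angle camera is an assumed default. The AVFoundation calls themselves are standard.

```swift
import AVFoundation

enum CaptureSetupError: Error {
    case deviceUnavailable, cannotAddInput, cannotAddOutput
}

/// Builds a session with two inputs (camera, microphone) and one video data output.
func makeCaptureSession(sampleBufferDelegate: AVCaptureVideoDataOutputSampleBufferDelegate,
                        queue: DispatchQueue) throws -> AVCaptureSession {
    let session = AVCaptureSession()
    session.beginConfiguration()
    defer { session.commitConfiguration() }

    // Assumption: default to the back wide-angle camera.
    guard let camera = AVCaptureDevice.default(.builtInWideAngleCamera, for: .video, position: .back),
          let microphone = AVCaptureDevice.default(for: .audio) else {
        throw CaptureSetupError.deviceUnavailable
    }
    for device in [camera, microphone] {
        let input = try AVCaptureDeviceInput(device: device)
        guard session.canAddInput(input) else { throw CaptureSetupError.cannotAddInput }
        session.addInput(input)
    }

    // Frames are delivered to the delegate on `queue`.
    let output = AVCaptureVideoDataOutput()
    output.setSampleBufferDelegate(sampleBufferDelegate, queue: queue)
    guard session.canAddOutput(output) else { throw CaptureSetupError.cannotAddOutput }
    session.addOutput(output)
    return session
}

/// Creates the live preview layer to add as a sublayer of the preview view.
func makePreviewLayer(for session: AVCaptureSession, frame: CGRect) -> AVCaptureVideoPreviewLayer {
    let layer = AVCaptureVideoPreviewLayer(session: session)
    layer.videoGravity = .resizeAspectFill
    layer.frame = frame
    return layer
}
```

Because `startRunning()` blocks until the session is live, it is usually called from a background queue when the view appears, with `stopRunning()` called when the view disappears.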

Recording Video

Now we need to add the ability to start recording. To make the state of the UI easier to keep track of, we define an enum of the possible capture states. Our action for the record button simply changes the current _captureState.
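As an illustration, the capture states could be modeled as below. The four case names come from this post; the transition helpers `afterRecordTap` and `afterFrame` are hypothetical and encode one plausible reading of the idle → start → capturing → end cycle, not necessarily the exact logic in the project.

```swift
/// The capture states the record UI can be in.
enum CaptureState: Equatable {
    case idle, start, capturing, end

    /// State after the user taps the record button.
    /// A tap starts a clip when idle and ends one when capturing.
    func afterRecordTap() -> CaptureState {
        switch self {
        case .idle: return .start
        case .capturing: return .end
        case .start, .end: return self  // a transition is already pending
        }
    }

    /// State after the sample buffer delegate has handled one frame.
    /// `start` becomes `capturing` once the clip file is set up;
    /// `end` returns to `idle` once the clip file is closed.
    func afterFrame() -> CaptureState {
        switch self {
        case .start: return .capturing
        case .end: return .idle
        case .idle, .capturing: return self
        }
    }
}
```

Keeping the transitions in pure functions like these makes the button action a one-liner and lets the state logic be tested without a camera.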

In order to actually receive the captured video data, we set the RecordViewController as the video output's sampleBufferDelegate. This was done when first setting up the capture session output.

The callbacks containing the video frames will be invoked on the given queue.

AVCaptureVideoDataOutputSampleBufferDelegate

In order to be the sampleBufferDelegate, RecordViewController must implement the AVCaptureVideoDataOutputSampleBufferDelegate protocol. The delegate method captureOutput(_:didOutput:from:) is where we handle the various capture states as they switch from idle → start → capturing → end.

Every time the capture of a new clip starts, we want to:

- Start playing the chosen audio track

- Create a new file on disk to store the video data for this clip

We will keep track of the filenames of each clip in a list. When the user finalizes the video by pressing the done button, we will merge these clips and the chosen audio track into one video file that will then be uploaded directly to Mux.

To do this, we need to add some additional properties to the RecordViewController
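One way to sketch the clip bookkeeping is as a small value type that the view controller could hold as a property. The `ClipStore` name, the temporary-directory location, and the `.mov` extension are all assumptions made for illustration.

```swift
import Foundation

/// Tracks the on-disk location of each recorded clip, in recording order.
struct ClipStore {
    private(set) var clipURLs: [URL] = []
    let directory: URL

    init(directory: URL = FileManager.default.temporaryDirectory) {
        self.directory = directory
    }

    /// Reserves a unique file URL for a new clip and remembers it.
    mutating func startNewClip() -> URL {
        let url = directory
            .appendingPathComponent(UUID().uuidString)
            .appendingPathExtension("mov")
        clipURLs.append(url)
        return url
    }

    /// Forgets the most recent clip and removes its file if one was written.
    @discardableResult
    mutating func deleteLastClip() -> URL? {
        guard let url = clipURLs.popLast() else { return nil }
        try? FileManager.default.removeItem(at: url)
        return url
    }
}
```

When the user presses done, `clipURLs` is the ordered list of files to merge.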

Now we can implement the rest of captureOutput(_:didOutput:from:)

The user can now start and stop the record button multiple times to record several video clips in a row.

Finalize the Video

When the user presses the done button we will pass all of the clips to our VideoCompositionWriter class (details on that below). This service will merge all the clips together with the audio and upload to Mux.

Merging Audio and Video with AVMutableComposition

Apple provides the AVComposition class to help us combine media data from several different files. We will use a particular subclass of AVComposition called AVMutableComposition to combine all of the video clips captured by the RecordViewController with a given audio track.
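A rough sketch of that merge step follows. The function name `makeComposition` and the `CompositionError` type are hypothetical, and this is not the post's VideoCompositionWriter. For brevity it uses the synchronous `tracks(withMediaType:)` and `duration` accessors, which are deprecated on recent OS versions in favor of their async equivalents.

```swift
import AVFoundation

enum CompositionError: Error { case cannotAddTrack }

/// Lays the clips end to end on one video track and, if an audio file is
/// given, places it under the video, trimmed to the video's total length.
func makeComposition(clipURLs: [URL], audioURL: URL?) throws -> AVMutableComposition {
    let composition = AVMutableComposition()
    guard let videoTrack = composition.addMutableTrack(
        withMediaType: .video,
        preferredTrackID: kCMPersistentTrackID_Invalid) else {
        throw CompositionError.cannotAddTrack
    }

    var cursor = CMTime.zero  // where the next clip starts in the composition
    for url in clipURLs {
        let asset = AVURLAsset(url: url)
        guard let source = asset.tracks(withMediaType: .video).first else { continue }
        let range = CMTimeRange(start: .zero, duration: asset.duration)
        try videoTrack.insertTimeRange(range, of: source, at: cursor)
        cursor = CMTimeAdd(cursor, asset.duration)
    }

    if let audioURL = audioURL, CMTimeCompare(cursor, .zero) > 0 {
        let audioAsset = AVURLAsset(url: audioURL)
        if let source = audioAsset.tracks(withMediaType: .audio).first,
           let audioTrack = composition.addMutableTrack(
               withMediaType: .audio,
               preferredTrackID: kCMPersistentTrackID_Invalid) {
            // Never run the audio past the end of the video.
            let duration = CMTimeMinimum(cursor, audioAsset.duration)
            try audioTrack.insertTimeRange(CMTimeRange(start: .zero, duration: duration),
                                           of: source, at: .zero)
        }
    }
    return composition
}
```

The resulting composition can then be written to a single file, for example with AVAssetExportSession, before that file is uploaded.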

Now that we have capture and upload of our video working, we can move onto actually displaying these videos in an infinitely scrolling feed.

Displaying Video with AsyncDisplayKit

Don’t block the main thread

The cardinal rule of building iOS applications is to avoid blocking the main thread. The main thread responds to user interactions - so any expensive operation that blocks the main thread will result in a frozen UI and a frustrating, glitchy user experience. This is particularly true for UIs such as the infinite scroll since it is reliant on continuous touch gestures and ideally incorporates interactive animations.

A video feed like TikTok’s contains complex UIs consisting of video, images, animations and text. Handling the measurement, layout and rendering of all these UI components becomes cumulatively expensive. In a scrolling view, especially one that is also playing video, this overhead will usually result in dropped frames.

AsyncDisplayKit was developed by Facebook for its Paper app. Its major design goal is to provide a framework that allows developers to move these expensive operations off the main thread. It also provides a convenient developer API for building infinitely scrolling UIs that need to periodically load new data.

Major Concepts of AsyncDisplayKit

Node

The basic unit of AsyncDisplayKit is a Node. A Node can be used just like a UIView. It is responsible for managing the content for a rectangular area on the screen.

Unlike a UIView, a node performs all of its layout and display off of the main thread. Some Nodes such as ASNetworkImageNode also provide much more functionality than their UIKit analogue. Unlike a UIImageView, an ASNetworkImageNode can even handle asynchronously loading and caching images for you.

NodeContainer

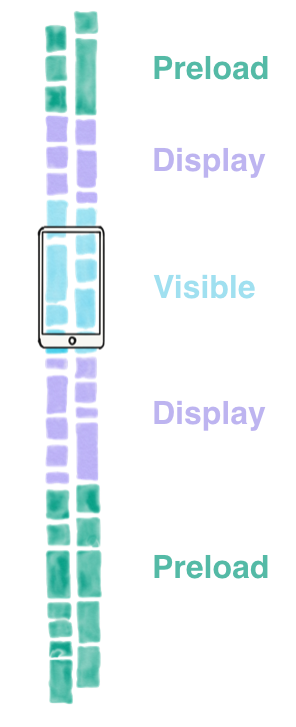

The responsibility of a NodeContainer is to manage the interfaceState of any Node that is added to it. In a scrolling view, the interfaceState of a Node might move from Preload → Display → Visible.

https://texturegroup.org/docs/intelligent-preloading.html

One of the reasons using AsyncDisplayKit gives us such a big performance win is that we can intelligently carry out tasks required to display a Node depending on its interfaceState - or how far away it is from being visible on screen.

For example, when a Node is in the Preload state, content can be gathered from an external API or the disk. When in the Display state, we can carry out tasks such as image decoding and image preparation for when the Node moves on screen and becomes visible.

Layout Engine

Finally, we have the layout engine. It was conceived as a faster alternative to Auto Layout. While the Node and the NodeContainer take care of displaying their content on a portion of the screen and all of the tasks required to display such content, the layout engine handles calculating the actual size of a Node and the positions and sizes of all of its subnodes. For example, you could define a layout specification where a node displaying text is positioned on top of a node displaying an image.

Documentation

See https://texturegroup.org/docs/getting-started.html

Using AsyncDisplayKit to Create a Video Feed

PostNode

Let’s start by defining the node used to display each video in the feed, called PostNode:

This node contains

- A reference to the Post that it is displaying

- An ASNetworkImageNode subnode that will display the thumbnail of the video as its background

- An ASVideoNode subnode used to play the HLS video

- A custom subnode - GradientNode - used to display a gradient over the node to make text and other post details easier to see.

We use a combination of ASRatioLayoutSpec and ASOverlayLayoutSpec to define the layout of the Post node. In this case we declare that the video subnode should have the same ratio as the screen (since it will be displayed full screen) and that the gradient will be placed over the video.

We use a ratio spec to provide an intrinsic size for ASVideoNode, since it does not have an intrinsic size until we fetch the content from the server.

Finally, we use the playback id that is sent to us in the asset information we received from the Mux webhook to construct both the thumbnail and streaming video URL.
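Building those two URLs from a playback ID is plain string work. The sketch below uses Mux's public URL patterns for HLS streams and thumbnails; the `MuxPlayback` type itself is a hypothetical helper, not part of any SDK.

```swift
import Foundation

/// Derives the playback URLs for one Mux asset from its playback ID.
struct MuxPlayback {
    let playbackID: String

    /// HLS manifest, suitable for AVPlayer or ASVideoNode.
    var streamURL: URL? {
        URL(string: "https://stream.mux.com/\(playbackID).m3u8")
    }

    /// Still image to show while the video loads.
    var thumbnailURL: URL? {
        URL(string: "https://image.mux.com/\(playbackID)/thumbnail.jpg")
    }
}
```

The thumbnail URL can be handed to the image subnode and the stream URL wrapped in an AVAsset for the video subnode.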

FeedViewController

FeedViewController displays a scrolling list of PostNodes. If we were using UIKit, FeedViewController would be the UITableViewController. Since we are using AsyncDisplayKit, it is instead a UIViewController that contains an ASTableNode (rather than a UITableView).

It implements ASTableDataSource, which should be familiar to anyone having implemented UIKit’s UITableViewDataSource:

It also implements ASTableViewDelegate, which is a parallel to UIKit’s UITableViewDelegate:

Fetching new content is done by implementing ASTableViewDelegate’s shouldBatchFetch(for:) method.

In tableNode(_:willBeginBatchFetchWith:), we make a call to the Parse server to retrieve posts that are ready for playback. We insert these rows into the table node and finally call completeBatchFetching on the ASBatchContext.
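The row bookkeeping behind a batch insert can be sketched independently of AsyncDisplayKit. The `FeedPage` type below is hypothetical, and identifying posts by a string ID is an assumption. It appends a fetched batch, skips posts already in the feed, and reports which rows are new.

```swift
/// Holds the IDs of the posts currently in the feed, in display order.
struct FeedPage {
    private(set) var postIDs: [String] = []

    /// Appends a fetched batch, ignoring IDs already present, and returns
    /// the range of row indices that were added.
    mutating func append(_ newIDs: [String]) -> Range<Int> {
        let start = postIDs.count
        var seen = Set(postIDs)
        // `insert` reports whether the ID was new, which also removes
        // duplicates within the batch itself.
        for id in newIDs where seen.insert(id).inserted {
            postIDs.append(id)
        }
        return start..<postIDs.count
    }
}
```

In the delegate method, each index in the returned range would be mapped to an index path in section 0 and passed to the table node's row insertion call before completing the batch.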

Conclusion

It turns out that building TikTok is not easy! In fact, we have found that building highly interactive, user-friendly iOS apps with feeds of video is quite challenging. Users today expect videos to load fast, and if a video stalls or buffers they will quickly keep scrolling or close your app. Mux provides the APIs for handling user uploads and optimizing video delivery, while your application can use the methods described in this post to build a capture and display experience that engages your users.

You can find all the code on GitHub here. If you're building a cool app with video, please get in touch with us @muxinc. We would love to talk!

Resources

The following tutorials and resources were immensely helpful when piecing together the different components for our iOS client.