Transport Layer Security (TLS) is essential to commerce, privacy, and trust on the Internet. A network connection secured by TLS is signified by that little padlock in your browser address bar; the lack of one is signified by the big scary message your browser shows when you visit a site that doesn’t support TLS or is using an untrusted certificate. Like the credentials in any strong identification system, be it in real life or on the Internet, TLS certificates eventually expire and must be renewed. A good certificate management scheme will issue certificates that are valid for only a few weeks; this necessitates a mechanism for automatic certificate renewal.

This presents a problem: How can you be certain your servers are successfully renewing their certificates?

To address this issue, Mux created an open-source certificate-expiry-monitor tool. It uses the Kubernetes API to discover servers that make use of TLS certificates, then emits Prometheus metrics with the expiration times for the certificates installed on each server. This makes it easy to alert on servers that are not renewing their certificates so we can intervene before a certificate expires.

First, let’s look at the incident that prompted us to create the certificate expiry monitor.

Where There’s Smoke, There’s Fire

On December 10th, 2018 at 22:46 UTC we began to see intermittent smoke-test failures for the Mux Video service. Some of our smoke-tests simulate video playback by retrieving HTTP Live Streaming (HLS) manifests and video segments for existing video assets.

The failed smoke-tests reported an HTTP 503 status code while retrieving the HLS master manifest. An HTTP 503 status code indicates the service is unavailable, and in practice can mean all manner of things; such responses should happen rarely, if ever. Each time we were paged, the smoke-test would clear a minute later and everything appeared fine. All internal metrics looked fine. We saw no HTTP 503 response codes from our origin services.

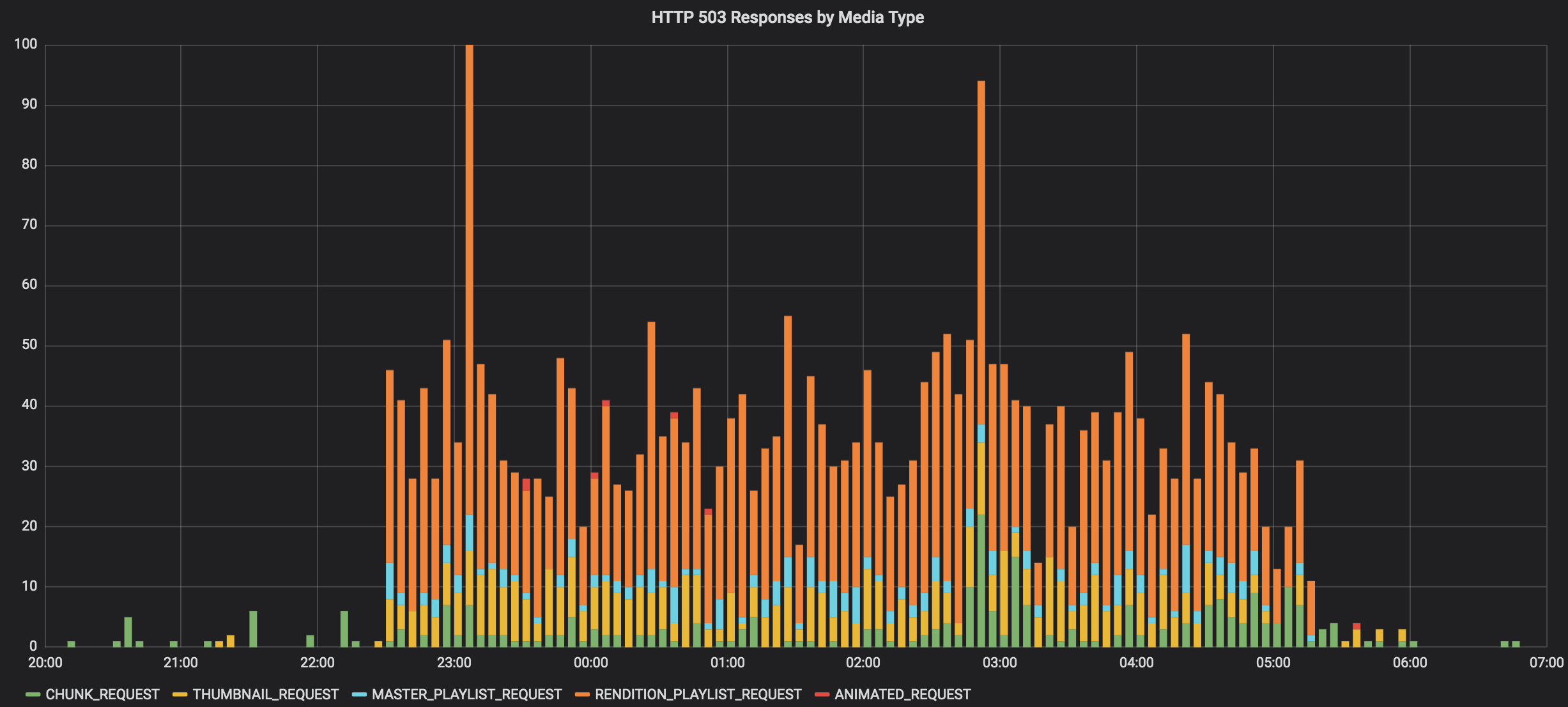

The frequency of the smoke-test failures seemed to be increasing. The problem was also affecting more than just manifests: any media request to our origin could fail, be it for manifests, thumbnails, video chunks, or GIFs. The following chart shows HTTP 503s by media-type from our CDN access logs over the course of the incident:

At this point, things had clearly gone sideways. Anyone receiving an HTTP 503 when requesting manifests or video segments might experience stalled or failed video playback. Some manual testing revealed that our CDN was reporting the origin TLS certificates as expired. We updated service status and began investigating.

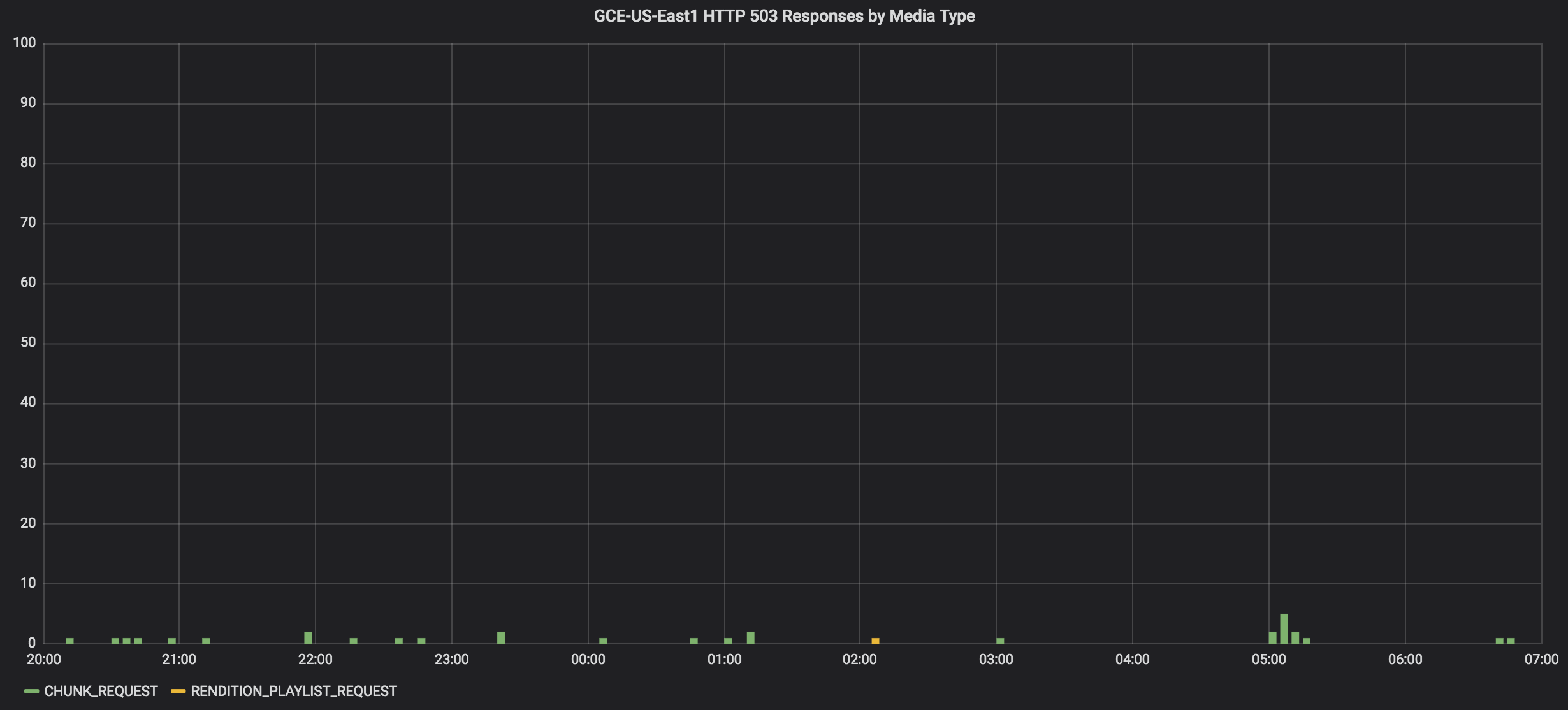

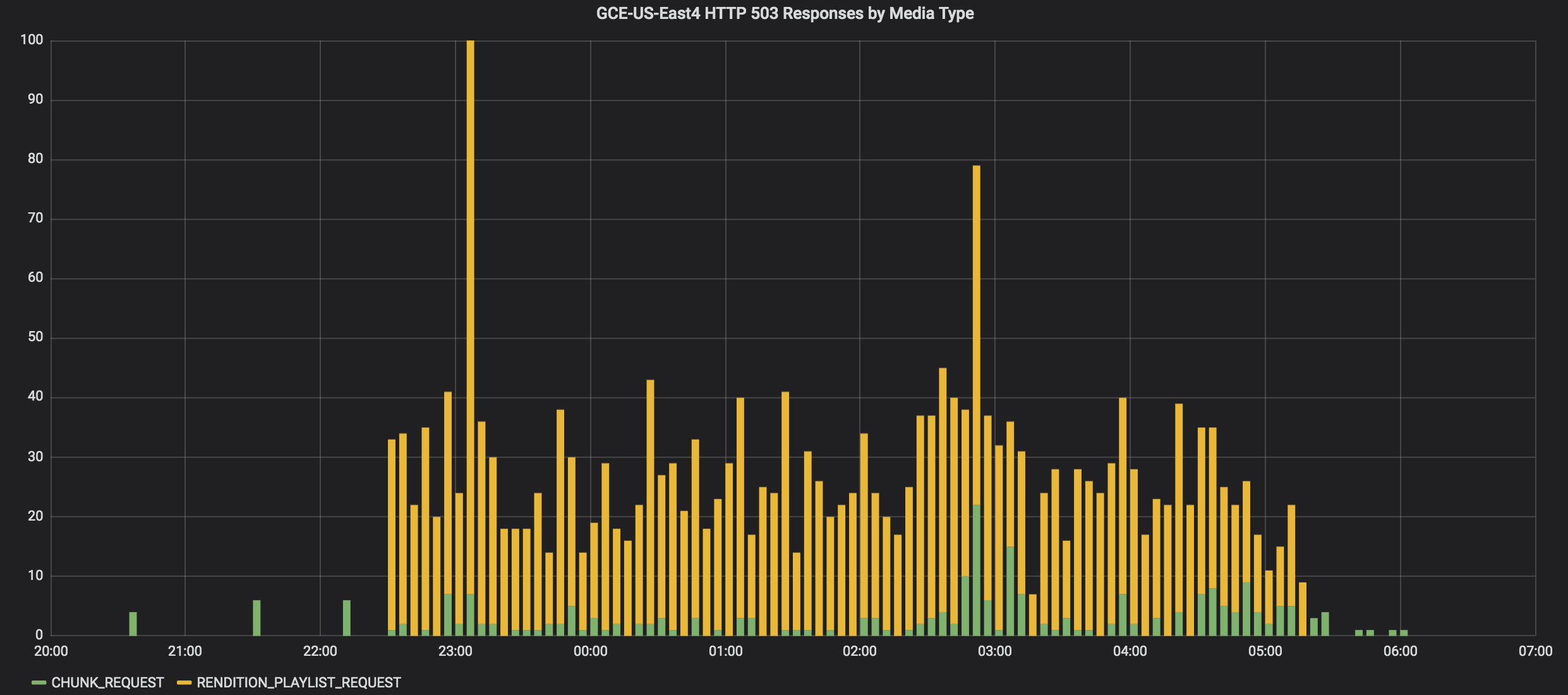

We operate Mux Video Kubernetes clusters in two GCE data-centers. Comparing the rate of 503 responses across the data-centers showed that the bulk of the errors were coming from the GCE US-East4 cluster:

Listing the certificate expiration dates for the Traefik servers in the GCE US-East4 cluster revealed that one of the servers was using an expired TLS certificate. The Google TCP Load Balancer delegated TLS termination to the Traefik servers and distributed new connections among them in a round-robin fashion. Any new connection sent to the Traefik server with the expired certificate would fail TLS negotiation – all without any indication of a problem in the Traefik access logs. This was remedied in the short term by replacing the problematic Traefik pod.

We desperately needed a way to automatically identify Traefik servers that were no longer renewing certificates.

The root cause proved to be Traefik web servers that failed to renew their certificates automatically because of stale ACME locks in the Consul key-store. This was due to a Traefik bug (https://github.com/containous/traefik/issues/2671) that remains open and unresolved at the time of this post.

Build or Buy?

There are lots of TLS certificate monitoring services on the Internet. Most work by periodically opening a connection to a URL of your choosing. They’ll compare the expiration date of the remote server certificate against some alerting threshold, and take some action such as invoking a webhook or sending an email when the threshold has been exceeded.

A problem with this approach is that requests from a TLS certificate monitoring service might be round-robin assigned to only those servers with valid TLS certificates, despite there being a server with an invalid certificate in the pool.

Additionally, it’d be useful to monitor the expiration of TLS certificates used to secure internal communications. This is difficult or impossible to achieve with services designed to monitor publicly accessible TLS endpoints.

We needed to directly monitor the certificates for all servers in the pool.

We use Prometheus and Grafana to collect, graph, and alert on metrics from critical infrastructure. It would be nice to rely on those same systems to alert on and diagnose certificate expiry problems rather than use a third-party hosted tool.

Let’s Build It!

Most Mux services are written in Go, so it only made sense for us to build the certificate-expiry-monitor in Go, too. This also simplifies use of the Kubernetes APIs to query for monitoring targets.

The certificate-expiry-monitor is configured with the following key options:

- Polling interval

- Kubernetes namespace to query

- Kubernetes labels to query for within a namespace

- Domains (e.g. foobar.example.com) to monitor

The monitor will periodically query Kubernetes to find all pods within a namespace that match a set of labels. It then iterates over the domains to monitor and ensures that each domain has a certificate that is present and valid. Prometheus metrics are generated for each pod + domain combination, indicating the time-to-expiry, time-since-issued, and whether the certificate is:

- Expired

- Not yet valid (valid-after is in the future)

- Not found

- Valid

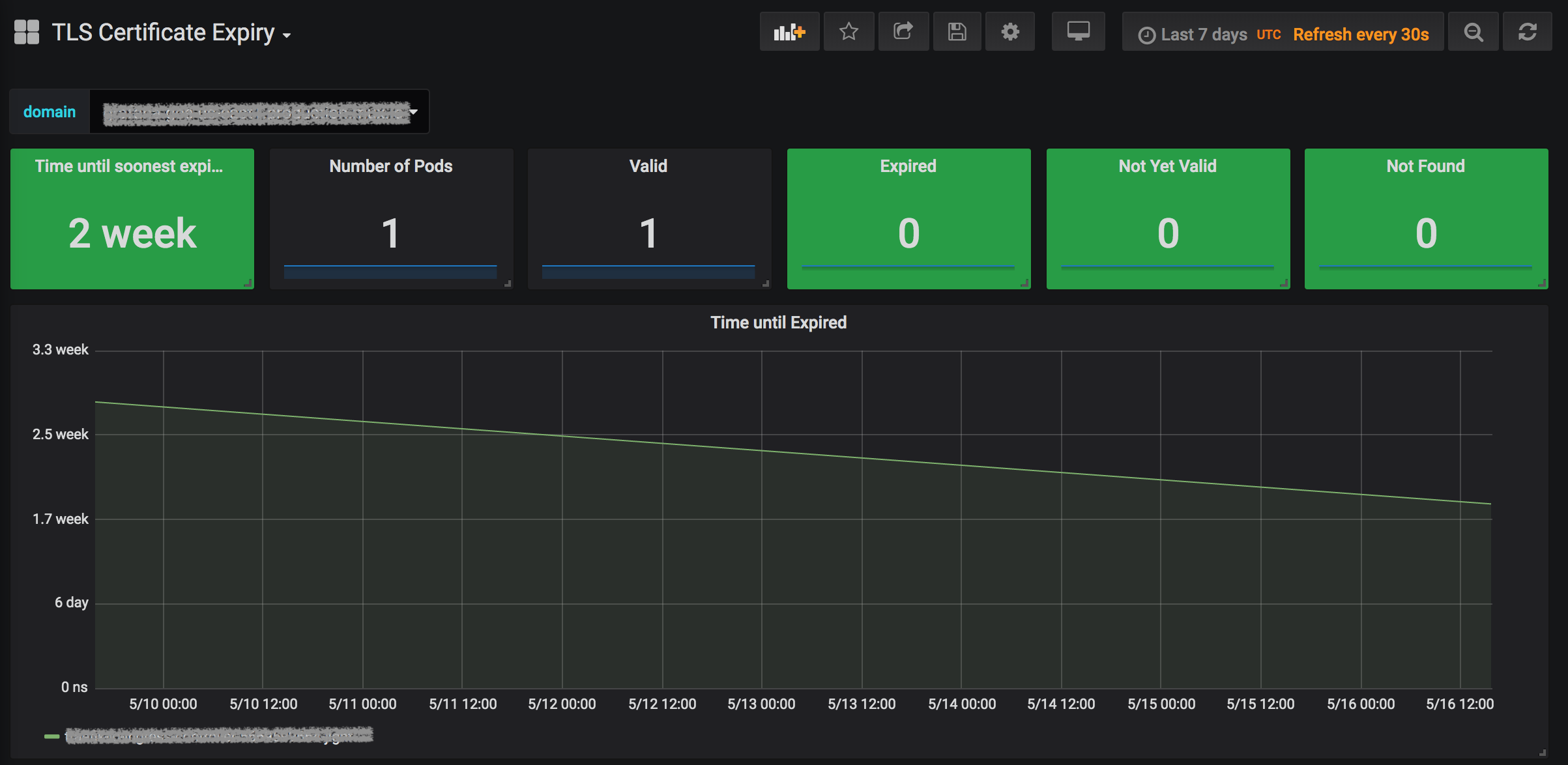

We’ve configured the certificate-expiry-monitor deployment to be scraped by Prometheus, making the metrics available for charting and alerting. We created a nice Grafana dashboard for at-a-glance monitoring of the pods configured with certificates for a given domain:

And since nobody likes staring at dashboards to make sure things are still functioning, we created Prometheus alerting rules to warn us when certificates are nearing expiry, and scream loudly when they’ve expired or are missing:

Conclusion

It is essential to monitor the expiration of TLS certificates to ensure service availability. Since building and deploying the certificate-expiry-monitor, we’re confident that the TLS certificates on our web servers are valid and being automatically renewed. We hope that others will find this tool useful, too. We love pull requests from the community!