Some technologies and plans exist to address the worst-case scenario. If everything continues to operate normally, they'll never be put into action, and that's a good thing. One example is Mux Video's automatic response to network failures, which relies on dynamic CDN selection at the start of each video playback.

On June 24th at 10:30 UTC, Verizon's backbone network (AS701) propagated erroneous Border Gateway Protocol (BGP) routing announcements that made a small ISP in Pennsylvania the preferred path for much of the Internet traffic in the Northeastern United States. The result was significant network congestion and errors, which degraded performance for some of the largest CDNs (Cloudflare, Fastly) and cloud providers (Linode, Amazon), and in turn for the websites and services they host.

This post will explain how Mux Video performed during the incident and how dynamic CDN selection can help reduce the impact of network outages.

How CDN Switching Works

Mux Video uses Citrix Intelligent Traffic Management (ITM) to identify the optimal CDN each time a viewer starts video playback. ITM relies on Citrix Radar data and Mux Data metrics, gathered from hundreds of millions of users around the world, to build a picture of how the Internet is performing across a variety of dimensions (CDN, network, geography, etc.).

Our ITM setup identifies the network (the autonomous system, or ASN) associated with each viewer's IP address and selects the optimal CDN for that network based on round-trip time (RTT) data gathered by Radar.

Network congestion and errors show up as higher RTT measurements. If the RTT for the preferred CDN rises to the point that it's no longer favorable, Citrix ITM begins recommending a different CDN from the set of supported CDNs. We currently use the Fastly and Stackpath/Highwinds CDNs and are in the process of adding more.
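The selection and switching behavior described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how ITM is implemented: the prefix-to-ASN table, the RTT snapshots, and the `lookup_asn`/`select_cdn` helpers are all hypothetical, and the documentation-range IP prefixes stand in for a real IP-to-ASN database and real Radar measurements.

```python
import ipaddress
from typing import Dict, Optional, Tuple

# Hypothetical prefix-to-ASN table. A real system would consult a full
# IP-to-ASN database; these documentation prefixes are placeholders.
PREFIX_TO_ASN: Dict[ipaddress.IPv4Network, int] = {
    ipaddress.ip_network("198.51.100.0/24"): 701,
    ipaddress.ip_network("203.0.113.0/24"): 22394,
}

SUPPORTED_CDNS = ("fastly", "highwinds")


def lookup_asn(ip: str) -> Optional[int]:
    """Return the ASN whose prefix contains `ip`, or None if unknown."""
    addr = ipaddress.ip_address(ip)
    for network, asn in PREFIX_TO_ASN.items():
        if addr in network:
            return asn
    return None


def select_cdn(ip: str, rtt_ms: Dict[Tuple[int, str], float], default: str = "fastly") -> str:
    """Pick the supported CDN with the lowest measured RTT for the viewer's network."""
    asn = lookup_asn(ip)
    candidates = [
        (rtt_ms[(asn, cdn)], cdn)
        for cdn in SUPPORTED_CDNS
        if asn is not None and (asn, cdn) in rtt_ms
    ]
    if not candidates:
        return default  # no measurements for this network: use the default CDN
    return min(candidates)[1]  # lowest RTT wins


# Hypothetical RTT snapshots (ms): normal conditions, then congestion on one CDN.
normal = {(701, "fastly"): 20.0, (701, "highwinds"): 25.0}
congested = {(701, "fastly"): 60.0, (701, "highwinds"): 25.0}

print(select_cdn("198.51.100.7", normal))     # fastly
print(select_cdn("198.51.100.7", congested))  # highwinds
print(select_cdn("192.0.2.1", congested))     # fastly (unknown network, default)
```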

CDN switching is automatic and driven entirely by changes in Radar data. Moving viewers off a congested path reduces the load on that path and gives those viewers a quicker route.

How Did Mux Video Perform?

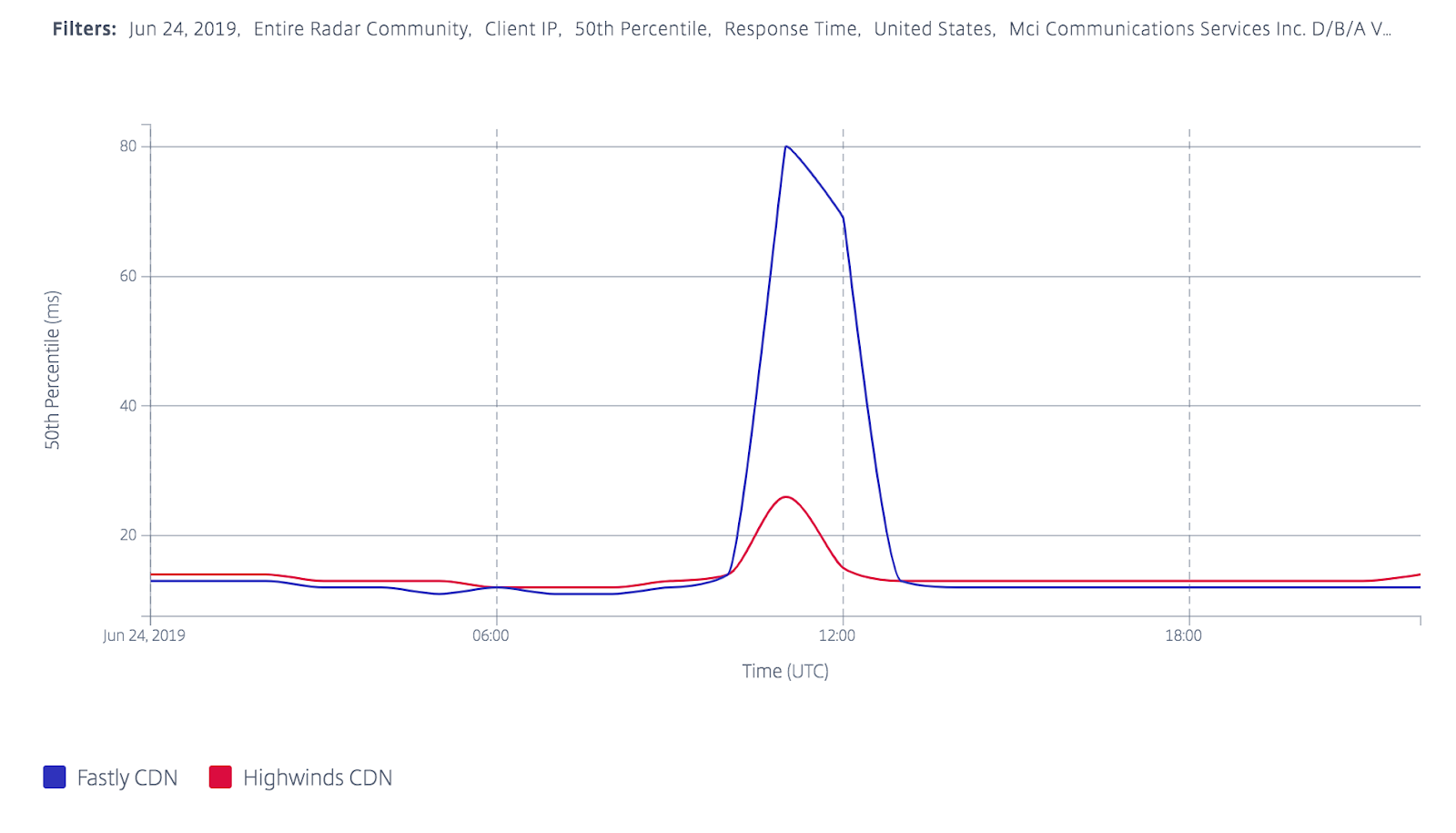

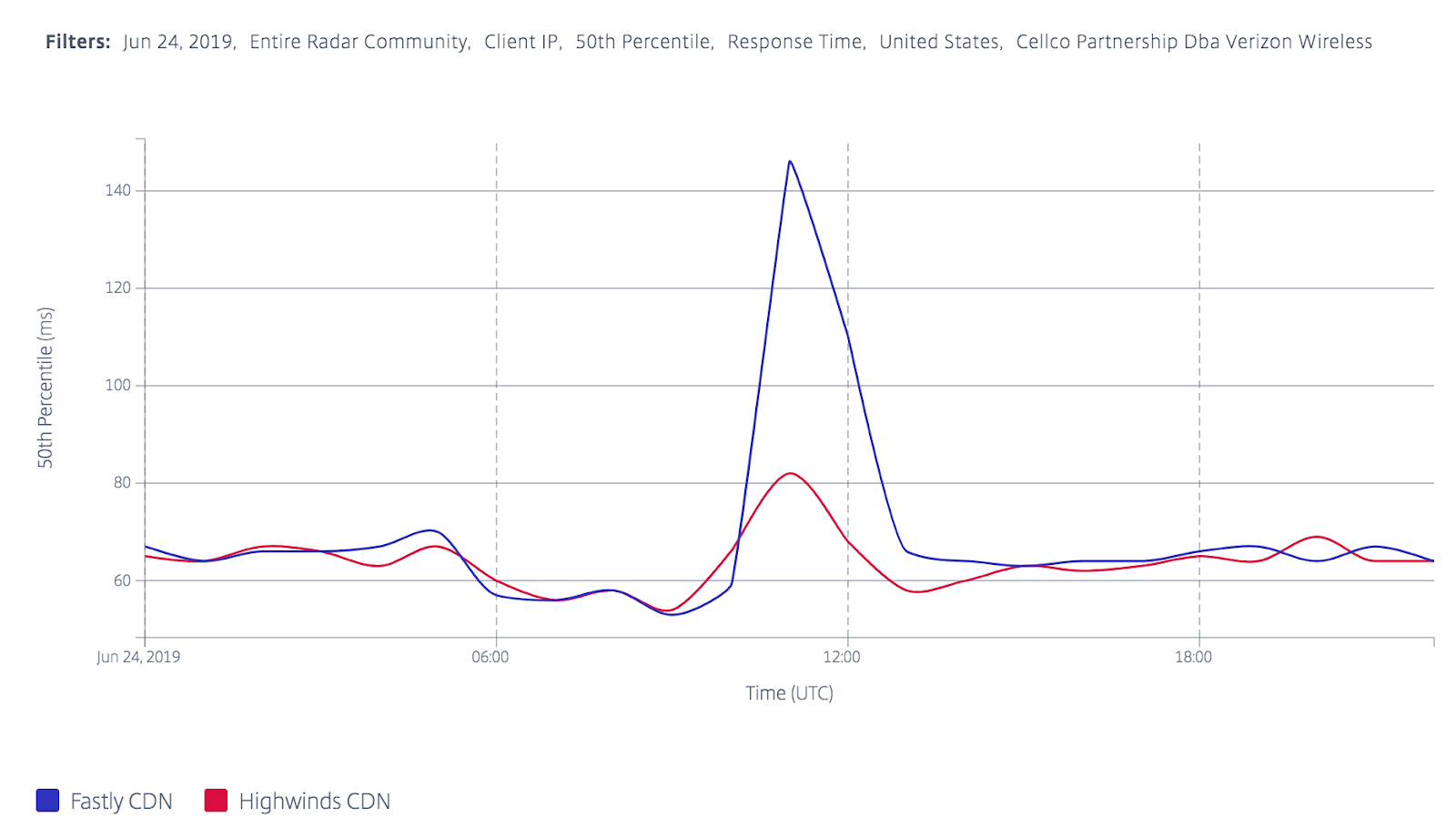

During the outage, RTT performance on AS701 for the Fastly CDN spiked from 11ms to 80ms; meanwhile, RTT performance for the Stackpath/Highwinds CDN rose only moderately from 12ms to 26ms. We also observed disruptions on another Verizon-managed ASN, AS22394.

Citrix RTT Data for ASN 701

Citrix RTT Data for ASN 22394

Citrix ITM began directing Mux Video traffic from the AS701 and AS22394 networks to the Stackpath/Highwinds CDN at 11:00 UTC. We currently use a Fastly origin shield in front of our media origin, so we apply a slight handicap to Stackpath/Highwinds to take advantage of the cost and performance benefits of serving from Fastly. During the incident, however, Fastly's poor RTT on these ASNs outweighed the handicap. Stackpath/Highwinds still requested media through our Fastly origin shield, which resides in a Fastly point of presence (POP) near our origin.
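One way to model the handicap is as a fixed penalty added to Stackpath/Highwinds' RTT before the CDNs are compared. The sketch below assumes an additive 10 ms handicap and a simple lowest-score rule; the actual value and scoring formula in our ITM configuration are not given here, so both are illustrative assumptions. The RTT inputs are the AS701 figures quoted above.

```python
from typing import Dict

# Hypothetical additive handicap (ms) applied to Stackpath/Highwinds so that
# Fastly is preferred when RTTs are close. 10 ms is an illustrative assumption,
# not the production value.
HANDICAP_MS: Dict[str, float] = {"fastly": 0.0, "highwinds": 10.0}


def choose_cdn(rtt_ms: Dict[str, float]) -> str:
    """Return the CDN with the lowest handicap-adjusted RTT score."""
    scores = {cdn: rtt + HANDICAP_MS.get(cdn, 0.0) for cdn, rtt in rtt_ms.items()}
    return min(scores, key=scores.get)


# AS701 RTTs (ms) before and during the incident.
before = {"fastly": 11.0, "highwinds": 12.0}
during = {"fastly": 80.0, "highwinds": 26.0}

print(choose_cdn(before))  # fastly: 11 vs 12 + 10 = 22
print(choose_cdn(during))  # highwinds: 80 vs 26 + 10 = 36
```

With these figures the switch does not hinge on the exact handicap: Fastly's incident RTT exceeded Stackpath/Highwinds' by 54 ms (80 - 26), so any additive handicap below 54 ms would still select Stackpath/Highwinds.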

The following charts show Mux Video traffic distributed by CDN during the incident. Note the significant swing in traffic from Fastly (green) to Stackpath/Highwinds (yellow) between 11:00 and 16:00 UTC:

Video delivered by CDN for AS701 and AS22394

Let's highlight some of the wins here:

- The cutover from Fastly to Stackpath/Highwinds was automatic and limited to the affected networks and geographies

- All traffic through Stackpath/Highwinds still went through our Fastly origin shield, so the switch didn't increase origin egress traffic
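Why the switch didn't increase origin egress can be shown with a toy two-tier cache model. This is a simplified sketch: the `Origin` and `CacheLayer` classes and the segment names are hypothetical, and the model ignores cache expiry, eviction, and the fact that each CDN runs many POPs.

```python
from typing import Dict


class Origin:
    """Media origin that counts how many requests reach it (origin egress)."""

    def __init__(self) -> None:
        self.requests = 0

    def fetch(self, key: str) -> bytes:
        self.requests += 1
        return f"segment:{key}".encode()


class CacheLayer:
    """A caching tier that serves hits locally and forwards misses to its parent."""

    def __init__(self, name: str, parent) -> None:
        self.name = name
        self.parent = parent
        self.store: Dict[str, bytes] = {}

    def fetch(self, key: str) -> bytes:
        if key not in self.store:
            self.store[key] = self.parent.fetch(key)  # miss: go up one tier
        return self.store[key]


origin = Origin()
shield = CacheLayer("fastly-shield", origin)  # single shield in front of the origin
edges = {
    "fastly": CacheLayer("fastly-edge", shield),
    "highwinds": CacheLayer("highwinds-edge", shield),  # second CDN also pulls via the shield
}

segments = [f"seg{i}.ts" for i in range(5)]

# Serve every segment from Fastly, then switch all viewers to Stackpath/Highwinds.
for seg in segments:
    edges["fastly"].fetch(seg)
for seg in segments:
    edges["highwinds"].fetch(seg)

print(origin.requests)  # 5: the Highwinds edge misses are absorbed by the shield
```

If the Stackpath/Highwinds edge were pointed directly at `origin` instead of `shield`, the same run would produce 10 origin requests instead of 5.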

Conclusion

Mux Video was able to identify and respond to the Verizon BGP routing incident quickly and automatically. We remain committed to delivering the best viewing experience, even under the most challenging conditions.

We encourage you to create a free account to see how Mux Video makes reliable video streaming easy!

If you want to learn more about how ITM and Radar handled this issue, check out the post from Citrix.