Author's Note: This article is based on a set of experiments I developed while investigating the feasibility of building a premium video experience using only royalty-free video streaming technologies, which became the basis of talks I gave at FOSDEM and SFVideo. At Mux, we use whatever technologies allow our customers to reach the most users, which today, unfortunately, means a heavy emphasis on patent-encumbered technologies. However, we are actively investing in royalty-free technologies to make video streaming on the internet better for everyone.

The foundation of the internet was built upon open technologies, unrestricted by patents, yet when we look at video on the internet, we see a different story. Overwhelmingly, online video relies at some point in the chain on patent-encumbered technologies, mostly those developed by MPEG and licensed through patent pools such as MPEG LA.

With the recent prominence of the royalty-free AV1 video codec, are we finally going to see a tipping point where we can stream video online without being restricted by patented software?

Maybe. But there’s a lot more to an online video streaming ecosystem than just a video codec.

Objective

The challenge I set myself was to build a premium video streaming experience that could reach as many browser-based viewers as possible, using only “libre” video technologies. Of course, the first question you’ll ask is “well, what do you mean by libre?” For me, libre means the following two things, in order of importance.

1. The technologies we use should not be restricted by software patents.

But why does using a patented technology matter? Money and risk. Using technologies such as MPEG’s H.264 (AVC) and AAC requires paying licensing fees to patent pools such as MPEG LA. The potential magnitude of these fees has grown in recent years with the well-documented uncertainty surrounding HEVC (H.265) licensing. This, coupled with the complicated licensing and patent ecosystem for MPEG-DASH, is driving people to look at alternative options.

One of the biggest challenges with licensing these patented technologies is understanding exactly who in the delivery chain should be paying the license fee, and exactly when the licensing fees kick in. This can be complicated to understand, and I’m not going to attempt to give my interpretations here (IANAL).

2. The software technologies we use should be developed transparently, in the open.

While the second is not an absolute necessity to me, I like working in ecosystems where I can make a difference, work with others to understand their requirements, and collectively improve the standards and software that we all use as an industry.

Components

When we think about how to structure our theoretical delivery technology stack, there are several critical areas where we can make use of libre technologies: encoders, codecs, containers, delivery technologies, and players.

I’m not going to spend too much time talking about encoders as it’s well appreciated that one of the most flexible video encoders in existence is a cornerstone of libre software.

Codecs

The most important decision we have to make in our streaming video stack is the choice of video and audio codecs, as this really defines the effective reach of our system. As we’ll discuss, support for open video and audio codecs is variable, but workarounds are available for some of the gaps we’ll identify.

Let’s start by breaking the common video and audio codecs we see on the internet today into two groups: libre-friendly codecs and patent-encumbered codecs. Next, we’ll test combinations from those groups to see which are meaningfully usable in modern internet browsers.

Libre Video Codecs

VP8

VP8 is a royalty-free codec developed by On2 (later acquired by Google), with roughly the same computational complexity as H.264. VP8 is no longer widely used for video on demand due to the prevalence of H.264 in modern browsers; however, it has seen a recent resurgence as the dominant video codec in WebRTC.

VP9

VP9 is a royalty-free codec developed by Google. It is the successor to VP8, offering higher compression ratios and better visual quality. It is fairly computationally complex to encode, but hardware-accelerated solutions are available. VP9 decoders are built into many modern browsers, and hardware-accelerated VP9 decoding is available on many devices. VP9 is widely used by sites like YouTube, Netflix, Facebook, and Twitch. These sites tend to use VP9 alongside a traditional MPEG-oriented delivery chain to provide fallback experiences for users on browsers and devices that can’t support VP9.

AV1

AV1 is a royalty-free codec developed by the Alliance for Open Media (AOM). AV1 started life as the VP10 codec, designed as a replacement for VP9, but Google instead decided to donate the work to AOM, where, combined with features from Cisco’s Thor and Mozilla’s Daala codecs, it became AV1. AV1 is very computationally expensive to encode and decode, and is not yet realistically ready for major deployments on the internet due to limited browser support and the disproportionately high cost of encoding content.

Patent Encumbered Video Codecs

AVC (H.264)

Advanced Video Coding (AVC) is a video codec developed by MPEG. It is the most commonly supported video codec on the planet and is available in every major browser and device. It is computationally cheap to encode and decode. Because AVC was developed by MPEG, royalties for its use are collected via MPEG LA.

HEVC (H.265)

High Efficiency Video Coding (HEVC) is a video codec developed by MPEG. It is the successor to the popular AVC (H.264) codec, providing improved compression ratios, particularly at resolutions above 1080p. Decoders for HEVC are mostly found in smart TVs, set-top boxes, and iOS devices, so HEVC is commonly used to deliver high-value UHD content. It is computationally expensive to encode and decode. HEVC is currently unavailable in most desktop browsers.

Libre Audio Codecs

Vorbis

Vorbis is a royalty-free audio codec developed by Xiph.Org. It is commonly used alongside the VP8 video codec to deliver a royalty-free streaming solution. Vorbis has been superseded by Opus.

Opus

Opus is a royalty-free audio codec developed by Xiph.Org. It is commonly used alongside the VP9 video codec to deliver a royalty-free streaming solution.

Patent Encumbered Audio Codecs

Advanced Audio Coding (AAC)

Advanced Audio Coding (AAC) is a commonly used audio compression codec developed by MPEG. It is the most commonly supported audio codec and is available in every major browser and device.

Dolby Digital (AC3)

Dolby Digital, also known as AC-3, is an audio codec developed by Dolby Laboratories. It's commonly available in living room devices, but usually not in web browsers or on mobile devices.

Dolby Digital Plus (eAC3)

Dolby Digital Plus, also known as Enhanced AC-3 or eAC-3, is an audio codec developed by Dolby Laboratories. It supersedes Dolby Digital, providing higher quality, more surround channels, and lower bitrates.

Codec Selection & Testing

From the codecs described above, I selected a representative set of test cases that we can run across desktop and mobile browsers. The codec and container combinations I selected were as follows:

AVC (H.264) and AAC in an MP4 container

Picked as a baseline test - we’d expect this combination to play almost everywhere.

VP8 and Vorbis in a WebM container

Picked as the simplest combination of libre video and audio codecs.

VP9 and Opus in a WebM container

Picked as the higher compression performance combination of libre video and audio codecs.

The tests work by loading a simple <video> element for each source, pointing at a statically hosted short video clip, with all required CORS setup configured correctly. The muted and autoplay attributes are set, along with playsinline, so that we can trivially verify playback on page load.
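The harness source isn't reproduced here, but the markup it relies on is simple. As a minimal sketch, assuming one `<video>` element per codec/container combination and `crossorigin="anonymous"` to match the CORS setup, a test page could be generated like this. The `TestCase` type, the `renderTestCase` function, and the example.com URLs are hypothetical. The `type` strings use standard codecs parameters for each combination.

```typescript
// Hypothetical description of one codec/container combination under test.
interface TestCase {
  label: string;
  src: string;      // URL of the statically hosted clip (hypothetical)
  mimeType: string; // container plus codecs parameter
}

// Escape characters that would break out of an HTML attribute or text node.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// One <video> per test case. muted + autoplay lets playback start on page
// load without a user gesture; playsinline keeps iPhones from forcing
// fullscreen; crossorigin requests the clip in CORS mode (an assumption).
function renderTestCase(tc: TestCase): string {
  return [
    `<figure>`,
    `  <figcaption>${escapeAttr(tc.label)}</figcaption>`,
    `  <video muted autoplay playsinline crossorigin="anonymous">`,
    `    <source src="${escapeAttr(tc.src)}" type="${escapeAttr(tc.mimeType)}">`,
    `  </video>`,
    `</figure>`,
  ].join("\n");
}

const testCases: TestCase[] = [
  { label: "AVC + AAC (MP4)", src: "https://example.com/clip.mp4",
    mimeType: 'video/mp4; codecs="avc1.42E01E, mp4a.40.2"' },
  { label: "VP8 + Vorbis (WebM)", src: "https://example.com/clip-vp8.webm",
    mimeType: 'video/webm; codecs="vp8, vorbis"' },
  { label: "VP9 + Opus (WebM)", src: "https://example.com/clip-vp9.webm",
    mimeType: 'video/webm; codecs="vp9, opus"' },
];

const page = testCases.map(renderTestCase).join("\n");
```

Supplying the `type` attribute lets a browser skip sources it knows it can't decode instead of downloading them first.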

You can run the tests yourself by following this link in different browsers; the source is available here on GitHub. The test browsers we’ll be using are the current set of “evergreen” browsers: Chrome, Firefox, Edge, and Safari.

Author's Note: I acknowledge the non-trivial usage of Internet Explorer and UC Browser. At the moment I’m not focusing on those platforms, but I’m interested in exploring these markets at a later date.

Codec Testing - Desktop

Codec testing on evergreen desktop browsers.

Out of the box, we actually do fairly well on this test. As I’d expect, our MP4 plays in all browsers, but more importantly, VP8 and VP9 play in three of our four test browsers. To me at least, this isn’t bad for a first experiment. The evergreen browser that gives us issues is Safari. To understand whether this is a big problem, let’s look at some coverage statistics for the big four browsers on desktop.

December’s Statcounter results put desktop browser usage as follows:

- Chrome: 71%

- Firefox: 10%

- Safari: 5%

- Edge: 4%

So when you add that up, using just VP8 with Vorbis or VP9 with Opus in a WebM container gives us around 85% desktop browser coverage. By my math, the MP4 combination should work in over 95% of desktop browsers. To me, being within 10 percentage points of the patent-encumbered experience on a first pass is pretty impressive, but we can certainly do better! First, though, let’s take a look at the state of play on mobile.
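The coverage figure is a simple sum of market share over the browsers where a combination plays. A small sketch of that arithmetic, where the `desktopShare` table is just the Statcounter numbers above and `playsWebM` is the set of browsers that passed the WebM tests:

```typescript
// Desktop browser share (%) from the December Statcounter figures above.
const desktopShare: Record<string, number> = {
  Chrome: 71,
  Firefox: 10,
  Safari: 5,
  Edge: 4,
};

// Browsers where both WebM test cases (VP8 + Vorbis, VP9 + Opus) played natively.
const playsWebM = new Set(["Chrome", "Firefox", "Edge"]);

// Coverage = sum of the share of every browser in which the combination plays.
function coverage(share: Record<string, number>, plays: Set<string>): number {
  let total = 0;
  for (const [browser, pct] of Object.entries(share)) {
    if (plays.has(browser)) total += pct;
  }
  return total;
}

const webmCoverage = coverage(desktopShare, playsWebM); // 71 + 10 + 4 = 85
const bigFourTotal = coverage(desktopShare, new Set(Object.keys(desktopShare))); // 90
```

The four browsers add up to 90% on their own, so the "over 95%" figure for MP4 presumably also counts the long tail of other desktop browsers, which this sketch leaves out.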

Codec Testing - Mobile

85% desktop coverage is encouraging, but on its own it’s fairly meaningless, because by every measure the majority of internet browser traffic is mobile. Let’s repeat our tests on the evergreen mobile browsers (Chrome on iOS and Android, and Safari on iOS).

Codec testing on evergreen mobile browsers.

Here we see a very different situation. Our MP4 continues to play everywhere, but we get no playback of our libre video codecs on any iOS platform. This is a fairly big problem - it means that we have no coverage on the most popular phone and tablet brand on the planet. Looking at Statcounter again for mobile devices, the problem looks even more acute:

- Android Chrome: 41%

- iOS Chrome: 14%

- iOS Safari: 23%

By these Statcounter figures, our combined mobile coverage is just 41% (Android Chrome alone), which is fairly bad, especially when, by my estimates, our MP4 file plays on over 90% of mobile devices. So is there anything we can do to increase our coverage on Safari, across desktop and mobile, as well as on Chrome for iOS?

Yes, we can use polyfills.

Polyfills

Polyfills are a great innovation within the video playback space. A polyfill decodes and renders VP8, VP9, or AV1 client-side in the browser using JavaScript (or in some cases WebAssembly, WASM), via a combination of the <canvas> element and the Web Audio API. One of the best examples of this technology is OGV.js, which was developed to allow videos uploaded to Wikipedia to play on more browsers (Wikipedia only ingests and delivers video content using “free” video codecs and containers).

So hopefully, OGV.js can help us reach some of the devices we can’t target natively with our libre codecs. Again, I’ve put together a test harness (you can try it here, source here), this time adding OGV.js as a polyfill, which we can use to test playback on our problematic browsers.

Testing OGV.js on problematic browsers.

Great! This solves a lot of our challenges: we now have playback working on Safari desktop, as well as Chrome and Safari on iOS. What’s more, OGV.js also works on later versions of Internet Explorer, giving us very comprehensive desktop coverage.

Of course, using polyfills for playback has its drawbacks. The most obvious is that decoding and rendering video client-side in JavaScript (or WASM if you’re lucky) is CPU-heavy, and with high CPU usage come high temperatures and high battery usage. Another drawback is the lack of a native playback experience, which means you’ll probably spend a good amount of time working on your interface and styling.
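Given those costs, the polyfill should only be used where native playback isn't available. A minimal sketch of that decision, assuming the page asks the browser's own `canPlayType()` about each libre combination and falls back to OGV.js only when none is supported. The checker is injected so the logic can run anywhere; in a browser you might pass `(t) => document.createElement("video").canPlayType(t)`. `choosePlayback` and `PlaybackPlan` are hypothetical names, not part of OGV.js or Video.js.

```typescript
// Same result type as HTMLMediaElement.canPlayType: "" means "definitely not".
type CanPlayType = (mimeType: string) => "" | "maybe" | "probably";

type PlaybackPlan =
  | { kind: "native"; mimeType: string }
  | { kind: "polyfill" }; // e.g. hand the WebM file to OGV.js instead

// Candidate libre combinations, higher-compression option first.
const LIBRE_CANDIDATES = [
  'video/webm; codecs="vp9, opus"',
  'video/webm; codecs="vp8, vorbis"',
];

function choosePlayback(canPlayType: CanPlayType): PlaybackPlan {
  for (const mimeType of LIBRE_CANDIDATES) {
    // "maybe" and "probably" both mean the browser expects to attempt it.
    if (canPlayType(mimeType) !== "") {
      return { kind: "native", mimeType };
    }
  }
  // No native WebM support (Safari in the tests above): decode in JS/WASM.
  return { kind: "polyfill" };
}
```

`canPlayType()` only reports what the browser expects to handle, which is why the tests above go further and actually play each clip.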

Containers

I’m not going to spend much time on containers: containers and codecs tend to come in pairs, and the supported combinations tend to be fairly limited. There’s really only one libre container that works well with the libre codecs we’ve identified, and that’s WebM. WebM is a derivative of the Matroska file format (.mkv), which you might have encountered before. All the experiments above were performed using the WebM container.
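One concrete way to see the Matroska lineage: both formats start with the same EBML header (element ID `0x1A45DFA3`) and differ in its `DocType` element (ID `0x4282`), which reads `webm` for WebM and `matroska` for .mkv files. A minimal sketch that reads that field from the first bytes of a file. It handles only the header, not a full EBML parse, and `readVint` and `sniffDocType` are illustrative names.

```typescript
// WebM and Matroska both start with this 4-byte EBML header element ID.
const EBML_MAGIC = [0x1a, 0x45, 0xdf, 0xa3];
// Element ID of DocType inside the EBML header.
const DOCTYPE_ID = 0x4282;

// Read an EBML variable-length integer at `pos`. The count of leading zero
// bits in the first byte gives the number of extra bytes that follow.
// Element IDs keep the length-marker bit; element sizes have it masked off.
function readVint(buf: Uint8Array, pos: number, keepMarker: boolean): { value: number; length: number } {
  if (pos >= buf.length || buf[pos] === 0) {
    throw new Error(`invalid or truncated EBML vint at offset ${pos}`);
  }
  const first = buf[pos];
  let length = 1;
  let mask = 0x80;
  while ((first & mask) === 0) {
    mask >>= 1;
    length++;
  }
  if (pos + length > buf.length) throw new Error("truncated EBML vint");
  let value = keepMarker ? first : first & (mask - 1);
  for (let i = 1; i < length; i++) {
    // Multiply instead of shifting to avoid 32-bit signed overflow.
    value = value * 256 + buf[pos + i];
  }
  return { value, length };
}

// Return the DocType ("webm", "matroska", ...) or null if there is no EBML header.
function sniffDocType(buf: Uint8Array): string | null {
  if (buf.length < 5 || !EBML_MAGIC.every((b, i) => buf[i] === b)) return null;
  const headerSize = readVint(buf, 4, false);
  let pos = 4 + headerSize.length;
  const end = Math.min(buf.length, pos + headerSize.value);
  // Walk the header's child elements until DocType is found.
  while (pos < end) {
    const id = readVint(buf, pos, true);
    const size = readVint(buf, pos + id.length, false);
    const dataStart = pos + id.length + size.length;
    if (id.value === DOCTYPE_ID) {
      const bytes = buf.subarray(dataStart, dataStart + size.value);
      return Array.from(bytes, (b) => String.fromCharCode(b)).join("");
    }
    pos = dataStart + size.value;
  }
  return null;
}
```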

Delivery Technologies

Now, just because we’ve got video playing in the browsers we care about doesn’t mean the job is done. I explicitly set out at the start of this experiment to create a technology stack that could provide premium video experiences on par with those built on patent-encumbered technologies.

Most video delivered online today uses adaptive bitrate (ABR) streaming. ABR selects between different copies of your content, encoded at different bitrates, to cope with changing network conditions without sacrificing the end-user experience.

In general, this is implemented by encoding content at multiple bitrates and then segmenting each resulting file into chunks of between 2 and 10 seconds. This allows the player to request individual segments of the content and to measure network performance as it downloads them, so it can make educated decisions about which rendition to request next.
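The rendition decision can be sketched as a throughput-based rule: time each segment download, smooth the measurements with an exponentially weighted moving average, and pick the highest-bitrate rendition that fits under a safety margin of that estimate. This is a deliberately simplified illustration; real players such as Video.js VHS and HLS.js use more elaborate heuristics, for example also weighing how much video is already buffered. The `ThroughputEstimator` class, the `chooseRendition` function, the 0.3 smoothing factor, and the 0.8 safety margin are all assumptions.

```typescript
interface Rendition {
  id: string;
  bitrateBps: number; // advertised bitrate of the rendition
}

// Exponentially weighted moving average of observed download throughput.
class ThroughputEstimator {
  private estimateBps: number | null = null;
  private readonly alpha: number;

  constructor(alpha = 0.3) {
    this.alpha = alpha; // weight given to the newest sample
  }

  // Record one segment download: bytes received over elapsed milliseconds.
  addSample(bytes: number, elapsedMs: number): void {
    if (elapsedMs <= 0) return; // ignore unusable measurements
    const sampleBps = (bytes * 8 * 1000) / elapsedMs;
    this.estimateBps =
      this.estimateBps === null
        ? sampleBps
        : this.alpha * sampleBps + (1 - this.alpha) * this.estimateBps;
  }

  get bps(): number | null {
    return this.estimateBps;
  }
}

// Highest bitrate within `safety` x estimate; lowest rendition if none fits
// or if there is no estimate yet (e.g. before the first segment).
function chooseRendition(renditions: Rendition[], estimateBps: number | null, safety = 0.8): Rendition {
  if (renditions.length === 0) throw new Error("no renditions");
  const sorted = [...renditions].sort((a, b) => a.bitrateBps - b.bitrateBps);
  if (estimateBps === null) return sorted[0];
  const budget = estimateBps * safety;
  let choice = sorted[0];
  for (const r of sorted) {
    if (r.bitrateBps <= budget) choice = r;
  }
  return choice;
}
```

The safety margin leaves headroom for throughput dips, trading a little quality for fewer stalls; the smoothing stops a single fast or slow segment from causing the player to bounce between renditions.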

HLS and DASH

Today, there are two prominent ABR technologies in use: HLS and DASH.

DASH (Dynamic Adaptive Streaming over HTTP) is an adaptive bitrate streaming standard developed by MPEG. It describes the available renditions in a single XML manifest, the Media Presentation Description (MPD), usually delivered with the .mpd file extension.

DASH has been the go-to solution for delivering libre video formats for a while, used by platforms such as YouTube, Netflix, and others. Even the current AV1-encoded test streams from Bitmovin are described in an MPEG-DASH manifest. However, with the announcement of the declared patent pool from the MPEG Licensing Authority (MPEG-LA), some organizations are starting to move away from DASH.

HLS (HTTP Live Streaming) is an adaptive bitrate streaming technology designed and maintained by Roger Pantos at Apple. While HLS is not patent-encumbered (as far as I’ve been able to establish), it falls foul of my second criterion for libre software: HLS is developed in a closed environment with little input from the wider community. There are communities building extensions on top of the HLS specification (for example LHLS), but these are unlikely to become formally standardized in the official HLS specification.

To support libre codecs and containers well, we could consider developing, in the open, another extension to HLS that formalizes support for libre codecs in a WebM container, providing an ABR technology that libre solutions could build on.
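As a rough illustration of what such an extension might produce, here is a sketch that writes an HLS master playlist whose variants point at WebM-based media playlists. The master playlist tags (`#EXTM3U`, and `#EXT-X-STREAM-INF` with `BANDWIDTH`, `RESOLUTION`, and `CODECS`) are standard HLS. The assumption is that each referenced media playlist would list `.webm` segments, which today's HLS specification doesn't define and which is exactly what the extension would need to formalize. The `Variant` type, the URIs, and the bitrates are illustrative.

```typescript
interface Variant {
  uri: string;          // media playlist listing WebM segments (hypothetical)
  bandwidthBps: number; // peak bitrate, as HLS BANDWIDTH requires
  width: number;
  height: number;
  codecs: string[];     // codec identifiers for the CODECS attribute
}

// Emit one #EXT-X-STREAM-INF entry, followed by its URI, per variant.
function buildMasterPlaylist(variants: Variant[]): string {
  const lines = ["#EXTM3U"];
  for (const v of variants) {
    lines.push(
      `#EXT-X-STREAM-INF:BANDWIDTH=${v.bandwidthBps},` +
        `RESOLUTION=${v.width}x${v.height},CODECS="${v.codecs.join(",")}"`
    );
    lines.push(v.uri);
  }
  return lines.join("\n") + "\n";
}

const playlist = buildMasterPlaylist([
  // vp09.PP.LL.DD: profile 0, level 3.1 / 2.1, 8-bit
  { uri: "vp9_720p/index.m3u8", bandwidthBps: 2500000, width: 1280, height: 720,
    codecs: ["vp09.00.31.08", "opus"] },
  { uri: "vp9_360p/index.m3u8", bandwidthBps: 800000, width: 640, height: 360,
    codecs: ["vp09.00.21.08", "opus"] },
]);
```

Because the master playlist format itself wouldn't change, players that already parse HLS would mostly need to accept the new codecs and segment container.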

While we continue to see increasing deployment of libre codecs on the internet, many of those deployments still use the patent-encumbered DASH manifest format, or rely on proprietary third-party ABR technologies. To me, it’s obvious that we need a libre ABR technology, especially now that we’re finally seeing libre video codecs, namely AV1, rise in browsers.

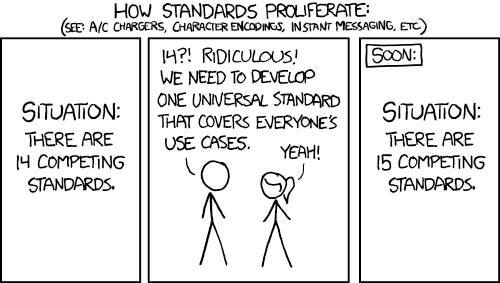

SASH

About four years ago, over a beer at the San Francisco Video meetup, Jon Dahl (our co-founder and CEO) and I were lamenting some of the design decisions we’d wrestled with while implementing MPEG-DASH. We devised a cunning plan to improve the situation: a new standard for ABR delivery.

Standards. https://xkcd.com/927/

SASH (Simple Adaptive Streaming over HTTP) is a protocol designed to learn from the experience of deploying HLS and DASH at scale and to improve on their design decisions, favoring simplicity and readability while removing ambiguity for player implementations. SASH is designed to be interchangeable with most media generated for HLS or DASH delivery.

At the start of 2019, while preparing this talk for FOSDEM, I dusted SASH off again, as my research had highlighted the lack of a libre ABR technology. Since then, I’ve spent time reviewing the decisions I made four years ago and improving the design to cater for more use cases.

SASH uses a simple JSON manifest to describe the ABR set.
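To illustrate why a JSON manifest keeps players simple, here is a sketch of parsing one. To be clear, the field names below are assumptions made for this sketch and are not the SASH schema; the proposal itself lives with the Demuxed community on GitHub. The point is that `JSON.parse` does the heavy lifting, leaving only validation, with no bespoke XML or playlist parser required.

```typescript
// Illustrative JSON ABR manifest shape. NOT the SASH schema.
interface RenditionEntry {
  id: string;
  bandwidth: number;  // bits per second
  codecs: string;     // e.g. "vp09.00.31.08,opus"
  segments: string[]; // segment URLs, in playback order
}

interface AbrManifest {
  version: string;
  segmentDuration: number; // seconds
  renditions: RenditionEntry[];
}

// Parse and minimally validate a manifest; throws on malformed input.
function parseManifest(text: string): AbrManifest {
  const data: unknown = JSON.parse(text);
  if (typeof data !== "object" || data === null) {
    throw new Error("manifest must be a JSON object");
  }
  const m = data as Partial<AbrManifest>;
  if (typeof m.version !== "string") throw new Error("missing version");
  if (typeof m.segmentDuration !== "number" || m.segmentDuration <= 0) {
    throw new Error("segmentDuration must be a positive number");
  }
  if (!Array.isArray(m.renditions) || m.renditions.length === 0) {
    throw new Error("at least one rendition is required");
  }
  for (const r of m.renditions) {
    if (typeof r !== "object" || r === null || typeof r.id !== "string" ||
        typeof r.bandwidth !== "number" || typeof r.codecs !== "string" ||
        !Array.isArray(r.segments)) {
      throw new Error("malformed rendition entry");
    }
  }
  return m as AbrManifest;
}

const example = parseManifest(JSON.stringify({
  version: "example",
  segmentDuration: 4,
  renditions: [
    { id: "360p", bandwidth: 800000, codecs: "vp09.00.21.08,opus", segments: ["360p/0.webm", "360p/1.webm"] },
    { id: "720p", bandwidth: 2500000, codecs: "vp09.00.31.08,opus", segments: ["720p/0.webm", "720p/1.webm"] },
  ],
}));
```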

One of the big changes I’ve been looking at this year is support for discontinuities, which enable a variety of use cases including monetization. I’ll be continuing to develop the SASH proposal as a part of the Demuxed community on GitHub. In particular, I’d love to hear any feedback you have on the v0.2 proposals!

Players

We won’t spend too much time looking at the libre player market (there’s room there for a whole other blog post), but we’ll take a look at some of the decisions you’ll have to make when choosing a web player.

The critical decision when selecting a player is to choose one with the appropriate level of abstraction for your business goals: do you want a full player framework with styling, plugins, and other bells and whistles, or just a framework for playback that you can build your own player around? Here are a couple of options that demonstrate those different ends of the scale.

Video.js

Video.js is a comprehensive HTML5 video player framework with plugins, styling, and built-in support for HLS and DASH. Video.js is developed in the open on GitHub and is licensed under the Apache 2.0 license.

Video.js is a great option to consider when building a libre delivery chain. It already has good support for libre codecs and containers, and it even supports ABR playback of libre codecs when they’re described correctly in a DASH manifest.

Adding support for something like SASH to Video.js would also be simple: Video.js has spent significant effort splitting HTTP-based ABR behavior out into a module designed to support future manifest formats. This component, Video.js HTTP Streaming (VHS), is also open source and licensed under the Apache 2.0 license.

Another great thing for our libre video chain is that there’s already an OGV.js plugin for Video.js. This means we can use a single video player, with a consistent experience, that automatically falls back to an OGV.js polyfilled experience on browsers that don’t support libre codecs natively.

HLS.js

At the other end of the playback spectrum is HLS.js. HLS.js isn’t a complete player solution; instead, it’s a library that adds ABR support (in the form of HLS) to the HTML5 <video> element. This is helpful if you want to build your own player from the ground up, with complete control over styling, buttons, plugins, and so on.

While adding support for SASH seems unlikely, adding support for something like VP9 in WebM over HLS would certainly be simple, and would give us a stepping stone towards a later, completely libre solution. HLS.js is also licensed under the Apache 2.0 license.

Player Problems

While we have some great options for players, we actually don’t have a complete picture. Our OGV.js polyfill solution will work well to plug gaps in fundamental playback, but as of today there’s no way to support ABR technologies in a polyfill.

ABR in modern browsers works by using the Media Source Extensions (MSE) SourceBuffer APIs to pass chunks of video and audio to the HTML5 media element; unfortunately, these capabilities don’t exist today in polyfills like OGV.js. This means that to deliver the premium experience we’re aiming for to all users, we’ll have to build SourceBuffer-like APIs into OGV.js or a similar polyfill.
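Part of what a SourceBuffer-like API would have to reproduce is MSE's sequencing rule: `appendBuffer()` throws an `InvalidStateError` if called while the buffer is still `updating`, so players queue segments and append the next one on `updateend`. A minimal sketch of that queue, written against a small `SourceBufferLike` interface (a hypothetical name) so the same code could sit in front of a native `SourceBuffer` or a polyfill that implements this subset:

```typescript
// The subset of the MSE SourceBuffer surface this sketch relies on.
interface SourceBufferLike {
  readonly updating: boolean;
  appendBuffer(data: ArrayBuffer): void;
  addEventListener(type: "updateend", listener: () => void): void;
}

// Serializes segment appends so appendBuffer is never called mid-update.
class AppendQueue {
  private readonly pending: ArrayBuffer[] = [];
  private readonly buffer: SourceBufferLike;

  constructor(buffer: SourceBufferLike) {
    this.buffer = buffer;
    // Each completed append frees the buffer for the next queued segment.
    buffer.addEventListener("updateend", () => this.flush());
  }

  enqueue(segment: ArrayBuffer): void {
    this.pending.push(segment);
    this.flush();
  }

  get queued(): number {
    return this.pending.length;
  }

  private flush(): void {
    if (this.buffer.updating) return; // wait for the current append to finish
    const next = this.pending.shift();
    if (next !== undefined) this.buffer.appendBuffer(next);
  }
}
```

A polyfill implementing this subset would still need to demux the appended WebM data and feed its decoder, which is the larger piece of work described above.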

Libre Video Chain Proposal

Let’s take the pieces of the puzzle we’ve assembled and see how far we’ve got with our libre video chain. I’ve broken this down into two sections: what we can achieve today, and what we can work towards.

Today

By leveraging the following libre technologies, we can reach over 90% of desktop browsers and over 80% of mobile browsers:

- VP8 with Vorbis, or VP9 with Opus

- WebM container

- Video.js with OGV.js polyfill

Unfortunately, we can’t offer a libre ABR technology today, but an easy interim solution would be to make the changes needed to support WebM in HLS while we build a long-term strategy.

“Soon”

In the meantime, it’s in the interest of everyone who uses the internet to work towards a universal open video delivery chain. This offers the opportunity to reduce delivery costs, complexity, and data usage, and to increase adoption of libre technologies on the internet.

In a few years, it would be amazing to see the industry move towards a toolchain which looks more like this:

- AV1 with Opus

- WebM Container

- SASH based ABR

- Video.js with OGV.js polyfill with ABR support

Summary

We’re in an exciting phase of evolution in online video. Support for libre codecs and containers has never been available on more browsers and devices, and we have JavaScript engines powerful enough to drive polyfills on platforms we can’t reach natively. We have an upcoming codec which actually stands a chance of providing universal libre codec support in the future.

We can already build a compelling video experience using only libre technologies, but we have some way to go to build experiences which are on par with the patent-encumbered MPEG technologies which dominate the marketplace today. Mux continues to invest in open technologies to apply them to our delivery strategy where they can provide the best user experience possible.

Header image: Elliott Bledsoe used under Creative Commons Attribution 2.0 Generic license.